Thank you for the introduction. I first want to say what an honour it is to be at the European Parliament’s Science and Technology Options Assessment (STOA) Annual Lecture, allowing me to speak in the company of eminent MEPs, the Commissioner and scientists, as well as my industry peers.

Since the advent of the World Wide Web some thirty years ago, five American tech giants have come to dominate our lives online: Google, Apple, Facebook, Microsoft and Amazon (GAFMA). Thanks to these brands – which are among the most recognized in the world – it has never been easier to gain access to information and services. Google helps us to find the answer to nearly any question, archive our e-mails, and share video content through YouTube. Through Facebook we have access to a global network of friends. We can have virtually anything delivered to our doorsteps from Amazon. And Apple and Microsoft allow us to do this from the palms of our hands. These companies have changed our lives.

At the same time, many people are asking themselves: what is the impact of just a handful of companies with such far-reaching influence on society? A few weeks ago, several US lawmakers publicly released a selection of Facebook ads bought by Russian operatives who tried to sow division among American citizens.1 And it has been known for a while that Google search results can be manipulated, in some cases to serve an extremist political agenda.2

With more articles being published online than ever, discerning good information is also harder than ever. This makes bad information look more valid because it is competing as effectively as good information in the snowstorm of bits and bytes that comes at us every day. Some even find that services that depend on clicks for revenue tend to downplay information that may be rock solid and important, but which delivers fewer clicks than questionable information that triggers reactions.3 I would like to challenge my fellow panelists4 by stating that the tech giants have a responsibility in this – and that they may look at the publishing industry for some inspiration.

What is the purpose of publishers in this new world?

Publishers have an important role to play in stopping the spread of misinformation and fake news. We acquire content and in the past twenty years we have increasingly moved it to platforms online, much like a tech company does. Speaking from my experience as a science publisher at Elsevier, we can guarantee that the material we produce adheres to the international standards of scholarship. It has been edited, peer-reviewed and validated. In the process it has been revised and revised again to further improve the quality. Most importantly, it is carefully curated so that it remains accessible – and citable! – in the future. In other words, we take responsibility for the content we produce.

That does not mean we do our work perfectly. After all, scientific publishing companies – as well as our counterparts in trade and educational publishing – are run by humans. Even after strict peer review, the occasional article slips through and is published when it should not have been. Luckily there are strict procedures in place to deal with such articles, e.g. through a corrigendum or erratum.

We also see that publishers are rapidly embracing technology. Today digital technology not only makes our day-to-day work easier, but also helps to further improve the quality of the content we publish. The Internet, and later the World Wide Web, were great strides forward in providing access to content, as well as structuring it. But with the advent of data mining technologies in recent years, and even more recently machine learning and AI, we are finally able to digest content in other ways besides reading. To put this differently: until now, in response to a search for information, publishers would provide a selection of documents (such as articles and book chapters) and the reader would use her human intelligence to extract the knowledge from this set of documents. AI, however, allows us to extract the knowledge from articles by applying, for example, knowledge graphs. But it should be noted here that publishers deploy machine learning and AI with strict editorial responsibility.

A strong example of how this may benefit the end user is Elsevier’s ClinicalKey, which presents the latest medical content in an easily digestible way. This enables a doctor in the emergency room to quickly access key medical guidelines as an added check or refresher when having to make decisions under pressure. That potentially saves lives. In a profession where time is a luxury, and a badly informed decision could mean the difference between life and death, it is essential that the information a doctor consumes is correct. As stewards of truth, publishers can guarantee that their content is not only trustworthy, but presented effectively as well.

While publishers deploy data mining to extract curated information, and self-improving algorithms ensure more accurate results, publishers’ services and reports are always a combination of technology and human intelligence. We are acutely aware that this development cannot be at the expense of quality, and trust in our publications and services is and will remain a top consideration moving forward.

And talking about trust, there are several developments which we monitor with a certain level of concern. In recent years publishers have been making more and more content available to the public, either through Open Access journals and platforms, or through preprint servers. Improving access is an excellent development, but this shift has a dark side too. We are very concerned about scientific articles published in predatory OA journals.

And what if a physician relies on medical information uploaded to a preprint server, a version of the article without any quality assessment whatsoever? And if we increasingly rely on AI algorithms, would we need a peer review process to assess these algorithms? Should there even be a level of transparency of the AI technology used? If we value the quality of our content, publishers will need to proceed with care.

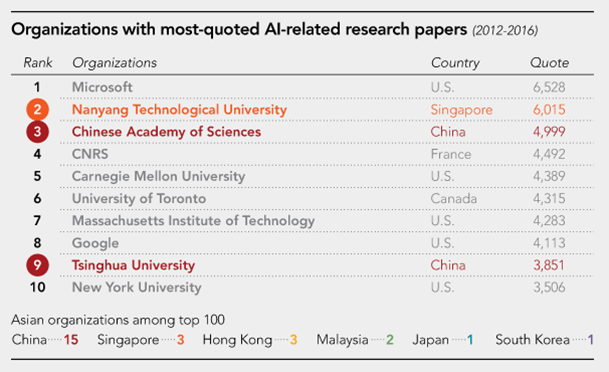

Earlier this year, Elsevier, as a Big Data company, worked together with the Japanese news source Nikkei in ranking organizations with the most frequently cited research papers about AI – a measure of the impact of research. This information was generated from our Scopus database, which collects and structures citations from millions of journal articles.

While the Top 10 of AI institutes is dominated by American organizations, including the tech giants Microsoft and Google, perhaps surprisingly, 15 out of the top 100 AI organizations are based in China (and only one in Japan – this came as a profound shock to the Japanese audience).

Image from Michiel Kolman’s presentation

The top 3 European organizations involved in AI research are all French: CNRS, Saclay and INRIA. Other European institutes in the Top 25 are Imperial, Oxford, ETH and UCL. The total number of European institutions that made the top 100 amounts to 30, compared to 34 North American and 25 Asian institutions (excluding the Middle East): in other words, Europe is certainly on par with Asia and America in AI research.


To conclude: it could be argued that tech companies are more and more becoming like publishers – presenting content to the public in the best way possible – while publishers are increasingly transforming into tech companies, relying on data mining and AI technologies to extract knowledge from content. Rather than single-purpose companies, we, publishers and tech companies alike, have become hybrid organizations with multi-purpose agendas. If we aim to continue to serve humanity and solve our grand challenges, we need to balance content and technology with all stakeholders. That is why I call for greater collaboration between publishers and technology companies to help keep our checks and balances in place. Thank you.

4 At the lecture, these included:

the MIT Media Lab’s Michail Bletsas,

Google’s Jon Steinberg,

Facebook’s Richard Allan,

and Andreas Vlachos of the University of Sheffie